Ethical AI: Who is responsible when things go wrong?

We need to decide what it means to be human before we invest too much power in machines, suggests a panel of technology and ethics experts. Chris Middleton listens in on an important debate.

One of the big questions facing society today is how to ensure that AI serves and benefits all humanity. A programme of ethical and responsible development could help prevent problems such as the automation of bias and discrimination, and the rise of inscrutable machines. But how can this be brought about?

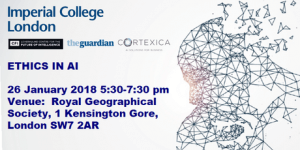

That was the subject of a public debate hosted by Imperial College London on 26 January 2018, among a panel of technologists and ethicists. The issues it raised will stand for decades to come.

Dr Joanna Bryson, who teaches AI ethics at the University of Bath, set out a key theme of the discussion: “I got into ethics when I noticed people being weird around robots, because I’m a psychologist. I now realise that the problem is this: we don’t understand ethics or what it means to be human.”

‘Being weird around robots’ refers to people wrongly perceiving machines to be human, and developing emotional responses to them, she suggested – a problem that has roots in a century of sci-fi lore, perhaps. As a result, many in the robotics and AI sector now favour the development of machines that stress their artificial nature, rather than present themselves as being ‘human’.

The human factor

As Bryson suggested, a critical challenge in defining what ethical AI development looks like is defining ethics themselves and understanding our own humanity. Part of that challenge lies in the lack of consensus across a whole range of issues in the human world, suggested Reema Patel, Programme Manager at the Royal Society for the Encouragement of Arts, Manufactures, and Commerce (RSA).

The RSA’s DeepMind partnership is exploring the role that citizens might play in developing ethical AI. Patel said: “Asking the public what they think isn’t just about commissioning an opinion poll. It really requires engaging with the uncertainty of the question. It really affects us all, our values, our choices, the trade-off that we make as a society. It’s so important to find new ways of engaging citizens in informed debate about the future of AI.”

This is because AI is starting to make decisions that would otherwise be made by humans, which adds new layers of ethical complexity. That’s where the RSA’s citizen outreach programmes could help, she said.

“One of the things we’re doing at the RSA is convening citizen juries: randomly selected groups of people, to deliberate on particular ethical issues. And we’re looking at the application of AI in the criminal justice system to better understand the parameters, and at the issue of how AI is influencing democratic debate.

“The reason for doing this is not just to find, ‘what does a citizen think?’ or, ‘what do people think off the top of their heads?’[…] Understanding what could create a moral consensus in any cultural and ethical context has to happen; we have to find new ways of doing that. So we’re prototyping, we’re experimenting. But in any space where there is no agreed moral consensus, the question must come back to the developer: is this a decision that an AI machine should be making?”

Good question: why are some people so keen to hand decision-making to machines?

Patel expressed her concern about AIs that “resemble human competency”, suggesting that this poses “really interesting questions about what it is to be human.” She shared the story of a colleague’s three-year-old child who had attempted to engage a smartphone in an emotional conversation, asking, “Siri: do you love me?”

Bryson suggested that some technologists could be using these types of emotional responses to AIs to abrogate their responsibility for flaws and errors in their systems.

“If you have a product that is dangerous, do you sell it today? And I feel like that because people are being fooled – they think intelligent means ‘person’, and so people are ready to say, ‘Siri: do you love me?’ – a lot of companies are trying to get out of what would, obviously, have been their responsibility of due diligence.”

Networks of intelligence

Professor Andrew Blake, Research Director at the Alan Turing Institute, suggested that it was the arrival of deep learning networks earlier this decade that brought questions about ethical development to a head.

“Deep learning networks are three times as effective in image recognition and three times as effective in speech recognition, but they are black boxes – even more so than previous technologies. If you have black boxes deciding the meaning of a word, then you don’t worry too much about understanding the rules, but if the same black box is deciding whether to give you credit or not at the bank, then you want to be able to challenge that, and it becomes much more important whether you can do that.”

But this challenge has inspired researchers, rather than hindered them, he said: “There is a lot of thinking going on about how you break open these black boxes and design them from the beginning to be ‘less black’, or if you can pair up the black box with a shadow system that is more transparent. The Turing Institute is all over this.”

However, Bath University’s Bryson cautioned that neither the existence of black-box solutions nor improvements in their development should encourage programmers to be complacent: “I don’t think deep learning is the end of responsibility. After all, you audit accounting departments without knowing how the humans’ synapses are connected!

“Even if deep learning was a complete black box, we could still put a ring around what it’s allowed to do. We’ve been dealing with much more complicated things than AI – people – for a long time.”
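Bryson's "ring" can be pictured in a few lines of code. The sketch below is illustrative only and is not something shown at the debate: `opaque_model`, the applicant fields, and `MAX_LIMIT` are hypothetical names invented for the example. The point is that the wrapper never inspects how the model reaches its answer; it only checks whether the answer falls inside what the system is permitted to do, and hands anything else to a person.

```python
# Hypothetical stand-in for a black-box model. Its internals are
# deliberately ignored by the guard below.
def opaque_model(applicant):
    return applicant["income"] * 0.5 - applicant["debts"]


# Assumed policy limit: the largest credit line the system may grant alone.
MAX_LIMIT = 10_000


def guarded_decision(applicant, model=opaque_model):
    """Accept the model's proposal only if it lies inside the permitted range.

    Anything outside the range is referred to a human reviewer instead of
    being acted on automatically.
    """
    proposal = model(applicant)
    if 0 <= proposal <= MAX_LIMIT:
        return ("auto", proposal)
    return ("human_review", None)


print(guarded_decision({"income": 10_000, "debts": 1_000}))
print(guarded_decision({"income": 50_000, "debts": 0}))
```

The first applicant's proposal falls within the limit and is handled automatically; the second exceeds it and is routed to a human, which keeps responsibility for the unusual cases with people.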

But the question remains: is there something fundamental about the nature of AI that sets it apart from any other type of software? Bryson responded: “First of all there is this question, what is intelligence? And I use a very simple definition, which is when you generate an action based on sensing. When you’re able to recognise a context and exploit it, or recognise a situation. But this is only one small part of what it is to be human.

“We aren’t building artificial humans, but we are increasing what we can act on and what we can sense. That’s what AI is doing. So there are two ways I would say that AI is different. One, we are able to perceive, using AI, things that we couldn’t perceive before. And companies and governments can perceive things about us, and we can perceive things about each other, as well as about ourselves.

“This isn’t just about discovering secrets, it’s also about discovering regularities that nobody knew existed before.”

“The other [way in which AI is different] is to do with how you set a system’s priorities so it’s not just passive. That’s what we call ‘autonomous’, when it acts without being told to act.”

Machines that learn

However, this uncovering of hidden ‘secrets’ about people is a controversial area, if for no other reason than an AI’s predictions may sometimes be wrong or untestable in the real world. Citizens may have no idea why they’ve been rejected for a job or for life insurance, for example, or why the police are knocking on their door.

A key ethical challenge lies in an increasingly important subset of AI: machine learning. While many AI systems themselves may be well designed, the training data that some use may contain unconscious human biases or assumptions. [For more on this, see this separate report.]

One example is the COMPAS algorithm that is already being used in the US justice system. Recent research by ProPublica has suggested that COMPAS is replicating and automating the existing systemic human bias against black Americans when issuing sentencing advice, largely because that bias is inherent across decades of historic sentencing data.

Arguably, the problem in this application of AI is that there is no ‘year zero’ for clean data in any system that relies on precedent, as legal judgements do.

Imperial College’s Professor Maya Pantic developed the theme: “What’s worrying is the bias in the data. If the data is biased in any way, this bias will be picked up and it will be propagated through all of the AI’s decisions. For example, if jobs are always given to people from certain areas… then you will continue predicting that these people from those areas will be getting the jobs.

“We need to discuss this issue with the government. We need to have something like auditing of the software, because a lot of things can go wrong, especially if we cannot have explicit machine learning – systems that are open boxes, not black boxes.”
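Pantic's jobs example can be made concrete with a toy model. This is a minimal sketch under stated assumptions, not any real hiring system: the records, the area names "north" and "south", and the 0.5 threshold are all invented for illustration, and the candidates are assumed to be equally qualified. The "model" simply learns the historical hire rate for each area and recommends whoever was favoured before.

```python
from collections import defaultdict

# Hypothetical hiring history: (area, was_hired).
# Candidates are assumed equally qualified; only the area differs.
HISTORY = (
    [("north", True)] * 8 + [("north", False)] * 2
    + [("south", True)] * 2 + [("south", False)] * 8
)


def train(records):
    """Learn the historical hire rate for each area."""
    hired = defaultdict(int)
    total = defaultdict(int)
    for area, was_hired in records:
        total[area] += 1
        hired[area] += int(was_hired)
    return {area: hired[area] / total[area] for area in total}


def predict(model, area):
    """Recommend hiring when the learned rate for the area is at least 0.5."""
    return model[area] >= 0.5


model = train(HISTORY)
for area in sorted(model):
    print(area, model[area], predict(model, area))
```

Nothing in the code mentions prejudice, yet every future candidate from "south" is rejected because past candidates from "south" usually were. An audit of the kind Pantic proposes would need to look at the training data as well as the software.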

Bryson cautioned against over-simplifying the problem by suggesting that it is somehow unique to AI. But she acknowledged that a serious challenge in new product development today is organisations using AI to reignite behavioural wildfires, in effect, that human society had previously extinguished.

“I don’t think that the bias itself is different. What you are saying is that human culture creates biased artefacts, but that’s been true all along and AI is no different. The difference comes from this weird over-identification [that people have with machines].”

“Machine learning is one way we programme AI, but some people are using this ‘magic dust’ to go back to things that we have previously outlawed – like the persistence of stereotypes, such as who gets hired for what jobs.”

However, the risk surely remains that human beings will perceive AIs to be fair and ‘neutral’ simply because they are machines. Who programmed them, why, using what data, and to benefit whom, will be locked away in another black box, in effect.

Just as significantly, there is a systemic bias within the entire technology sector. In the UK, for example, 87 per cent of people in science, technology, engineering, and maths careers are male, and the figures are even worse among coders.

Imperial College’s Pantic said: “If you think about computer science, it’s white males. It’s ten per cent females, and an even lower percentage of non-white people. We shouldn’t use technology that is built by such a small minority of the population.”

God vs Tony Benn

The evening’s most pragmatic view came from an unusual source, in technology debate terms: the Rev Dr Malcolm Brown, who sits on the Archbishops’ Council of the Church of England. He said:

“The difference is authority, surely. If we treat the AI that has a bias built into it as somehow overruling our human judgement, then that’s different to, say, an HR director, whose actions can be challenged.

“I’m reminded of something that Tony Benn said should be asked of everyone in power: ‘What power do you have, who gave you that power, whose interests do you serve with that power, to whom are you accountable, and how can we get rid of you?’ These are interesting questions [that should be applied to AI].

“With AI, the advance that makes this problematic involves manifestations of power. Is that power in the hands of the people who create the AI, or is it in those of the user? Where do responsibility and accountability lie, and how do we change that if it goes wrong? These are the areas where we are floundering.”

Conclusions

It’s ironic that it took a man of God to raise the question of human authority and responsibility, especially when God could be described as the ultimate black box solution. Brown’s suggestion that we should be able to question who holds the power in any AI system, to whom they are accountable, and – crucially – how we can get rid of them, was the best idea on the table.

As AI has more and more power invested in it by human beings, having a robust mechanism in place that gives us clear answers to these questions is the most sensible, and human, response to any ‘magic dust’ strategy.

After all, private companies are accountable to their shareholders, and not to the general public, and this problem will become more significant as the use of AI spreads. For example, a financial institution might be tempted to use AI to rig a market or commit invisible fraud. Or take the insurance sector, which might see an advantage in making more and more members of the public uninsurable, through the use of predictive AI.

Let’s look at the latter example. Would any application of AI in this regard be legitimate, fair, or transparent? And what if the AI’s predictions are wrong? In that scenario, who would be accountable: the company, the person who commissioned the system, the person who set the parameters, the AI’s vendor, or its programmers? The likelihood that responsibility would be lost in an ethical fog is the real issue here.

Any deployment of black box systems exists in another black box: one in which authority, ethics, accountability, and responsibility are locked away and set aside.

This is why AI regulation and software auditing within a future evolution of GDPR could be the best available option. After common sense, of course. Arguably, any organisation that questions the need for such regulation is one that should have its own motivations and ethics examined.

Further reports by Chris Middleton on this topic:

AI’s About Face

The Bias Virus

The Good Machine

A.I: Friend or Foe?

A.I. Uber Alles?

• Author’s note: Some of the speakers’ unscripted comments have been edited for sense and grammar.

• For more articles on robotics, AI, and automation, go to the Robotics Expert page.

• A version of this article was first published on Diginomica.

Enquiries

07986 009109

chris@chrismiddleton.company

If you copy or quote content from this report, please acknowledge the source. Thank you.